AI At Work Denying Medicare Claims

Underlying bias or working as intended, AI-augmented program alleged to recommend denial of legitimate claims; Humana is seeking dismissal of lawsuit filed on behalf of two patients

By John P. Desmond, Editor, AI in Business

In a class-action suit filed in December alleging that AI was used to deny legitimate Medicare claims, plaintiffs charge that the health insurance company Humana used the AI model nH Predict to deny medically necessary care to disabled and elderly patients covered under Medicare Advantage.

A month earlier, another lawsuit alleged that UnitedHealthcare also used nH Predict to reject specific claims, despite knowing that the tool's conclusions contradicted those of physicians.

"Biases infiltrate AI because an algorithm is like an opinion. Biases can enter throughout the AI lifecycle — from the framing of the problem the AI is trying to solve, to product design and data collection, to development and testing. As such, risks and controls should occur at each stage of the AI lifecycle," stated Sonita Lontoh in an account from Fox Business.

A board member of several companies listed on the New York Stock Exchange and Nasdaq, Lontoh suggested that board members need a game plan for AI governance that includes collaboration with experts from inside and outside the company.

AI Model’s Predictions Called “Rigid and Unrealistic”

Another account of the suit, from Louisville Public Media, stated that nH Predict compares information about a patient against “a database of six million patients it compiled over the years” to predict how long that person will require care. But the suit claims the AI makes “rigid and unrealistic predictions” for patient recovery.

“Humana knows that the nH Predict AI Model predictions are highly inaccurate and are not based on patients’ medical needs but continues to use this system to deny patients’ coverage,” the suit reads. Medicare Advantage patients hospitalized for three days are usually eligible to get up to 100 days of follow-up care in a nursing home.

But the suit claims, “With the use of the nH Predict AI Model, Humana cuts off payment in a fraction of that time. Patients rarely stay in a nursing home more than 14 days before they start receiving payment denials.”

In a statement emailed to the publication, Humana spokesman Mark Taylor said nH Predict uses “augmented intelligence.” He stated, “By definition, augmented intelligence maintains a ‘human in the loop’ decision-making whenever AI is utilized. Coverage decisions are made based on the health care needs of patients, medical judgment from doctors and clinicians, and guidelines put in place by CMS (Centers for Medicare and Medicaid Services).”

The case is similar to a suit filed against UnitedHealth Group and naviHealth, the firm that originally developed the nH Predict product. Plaintiffs in both cases are represented by the Clarkson Law Firm; in the Humana case, two named plaintiffs are patients the suit maintains were improperly denied coverage.

“What we’re asking Humana to do is to follow the law by making sure that their coverage decisions are not outsourced to an AI,” stated attorney Ryan Clarkson, the firm’s founder. “And that they’re having real medical professionals, as required under the law, make decisions about elderly patients’ care.” He said he knows of families that paid tens of thousands of dollars for treatment that should have been covered by the insurance plan.

Humana Files to Dismiss the Case

More recently, Humana asked the federal court to dismiss the class action, calling the claims false and stating that the two women who brought the suit must first exhaust all their appeal options, according to an account from McKnight’s Long-Term Care News. Humana argued that jurisdiction lies not with the US District Court for the Western District of Kentucky but with the federal Centers for Medicare & Medicaid Services (CMS).

“Humana’s coverage determinations are not governed by state law. Instead, Humana is subject to ‘extensive regulations’ by CMS,” the insurer argued. “Allowing this case to continue would require the Court to apply twenty-three states’ standards, and risk outcomes that conflict with the federal government’s Medicare rules. This is exactly why the Medicare Act has such a vast preemption provision.”

Whether nH Predict was acting exactly as designed, or whether it reflected bias in the data it relied on, remains to be seen. More broadly, bias in the data used to train AI models is a continuing concern.

"AI systems are not created in a vacuum. Their behaviors reflect the best — but also the very worst of human characteristics," stated Adnan Masood, chief AI architect at UST, a digital transformation solutions provider, and a Microsoft Regional Director, in the Fox Business account. "These models are prejudiced — and it is up to us to fix them," he stated.

Efforts are underway. The US National Institute of Standards and Technology (NIST) has proposed a standard for identifying and managing bias in AI models. The proposed US AI Accountability Act would require bias to be addressed in corporate algorithms. In Europe, the General Data Protection Regulation introduces the right to be informed of an algorithm’s output. In Singapore, the Model AI Governance Framework has a strong focus on internal governance.

"There are many more disparate examples. But algorithms operate across borders; we need global leadership on this. By providing stakeholders and policymakers with a broader perspective and necessary tools, we can stop the bigot in the machine from perpetuating its prejudice," Masood stated.

He remains optimistic that humanity can make AI work to its benefit.

Zero Risk of Bias In AI Called “Not Possible”

Some believe it is not possible to eliminate bias from data; the best one can do is understand how an AI model arrives at its recommendations and manage the bias that remains.

The 2022 paper from NIST outlined the challenge, stating, “Current attempts for addressing the harmful effects of AI bias remain focused on computational factors such as representativeness of datasets and fairness of machine learning algorithms. These remedies are vital for mitigating bias, and more work remains.”

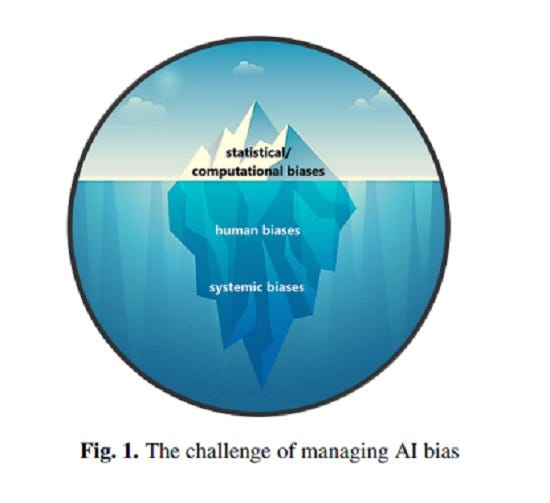

The paper’s “iceberg” image of AI bias illustrates that most of the challenge is hidden from view, in the form of underlying human and systemic biases. “Human and systemic institutional and societal factors are significant sources of AI bias as well, and are currently overlooked. Successfully meeting this challenge will require taking all forms of bias into account,” the authors stated.

“Bias is neither new nor unique to AI and it is not possible to achieve zero risk of bias in an AI system,” the authors stated.

Making AI more trustworthy seems to be the best that we can do.

Read the source articles and information from Fox Business, Louisville Public Media, McKnight’s Long-Term Care News, and from a proposed NIST standard.