Hyperscaler Moves Pull DeepMind Cofounder Into Microsoft

Mustafa Suleyman sees a future in which AI agents operate with autonomy to take actions, against a backdrop of concerns about how humans can control AI

By John P. Desmond, Editor, AI in Business

In a game only AI hyperscalers can play, Microsoft has hired away Mustafa Suleyman, a cofounder of Google’s DeepMind AI lab and the founder of startup Inflection AI, which had raised $1.5 billion to fund the development of a personal AI assistant.

Suleyman is now CEO of Microsoft AI and will lead the consumer AI business of Microsoft, reporting directly to Satya Nadella, Microsoft’s CEO. The Microsoft consumer AI business includes the Copilot chatbot, Bing search engine and Edge browser. Copilot was built using technology from OpenAI.

Karén Simonyan, who co-founded Inflection AI along with Suleyman and Microsoft board member Reid Hoffman, will also join Microsoft as chief scientist of Microsoft AI.

“I am excited for them to contribute their knowledge, talent and expertise to our consumer A.I. research and product making,” stated Nadella in an email to Microsoft staff quoted in The New York Times. He added, “We have been operating with speed and intensity, and this infusion of new talent will enable us to accelerate our pace yet again.”

Inflection released its personal AI assistant Pi in April. It received accolades for its friendly nature and reached a million daily users, the Times reported.

In a blog post reported in an account from Reuters, Nadella stated, "This infusion of new talent will enable us to accelerate our pace yet again. As part of this transition, Mikhail Parakhin and his entire team, including Copilot, Bing, and Edge; and Misha Bilenko and the GenAI team will move to report to Mustafa."

Bilenko has been corporate VP of GenAI at Microsoft for three years; he worked on Azure AI cognitive services before that, according to his LinkedIn page. Before returning to Microsoft, he worked in Moscow at Yandex, a Russian IT services company, according to an account on his personal website.

The moves come against a backdrop of heightened regulatory scrutiny of Microsoft’s ties with OpenAI. Nadella stated the company is “very committed” to its OpenAI partnership and, at the same time, is partnering with many other startups, the Reuters account noted.

Meanwhile, Google is expanding its AI-related efforts, announcing this week that Apple is in talks to integrate Google’s Gemini AI engine into the iPhone, according to the Reuters account.

Taking over as CEO of Inflection is Sean White, the former head of R&D at Mozilla. He announced plans to pivot to serving models to commercial customers instead of focusing on consumers, the Reuters account indicated.

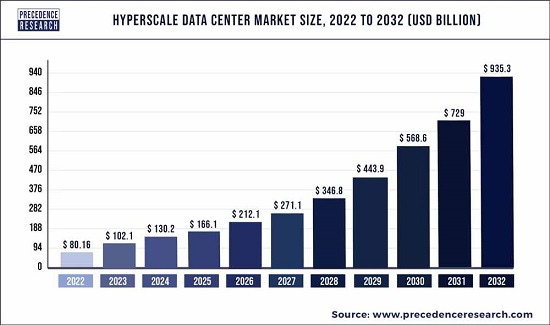

Demand for AI Apps Driving Hyperscale Data Center Growth

The overall hyperscale data center market was estimated by Precedence Research to be US $80.2 billion in 2022, expected to grow to US $953.3 billion by 2032, a growth rate of 28 percent annually. Cloud providers held 62 percent of the market in 2022.
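The growth rate can be checked against the two market-size figures. The following is a minimal sketch, assuming the 28 percent figure is a compound annual growth rate over the 10 years from 2022 to 2032; the function and variable names are illustrative and do not come from the Precedence report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market-size figures quoted above, in billions of US dollars.
market_2022 = 80.2
market_2032 = 953.3

# Assumption: growth compounds once per year across 2022-2032.
implied_rate = cagr(market_2022, market_2032, 2032 - 2022)
print(f"Implied annual growth: {implied_rate:.1%}")
```

Under that assumption, the implied rate comes out at roughly 28 percent, in line with the reported figure.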

Companies participating in the hyperscale data center market include Mellanox Technologies, HP, Cisco, Intel and IBM, according to the Precedence report. IBM, for example, recently announced its Hyper-Scale Manager version 45.1, offering a unified user management interface.

AI is driving the growth of hyperscale data centers, according to an account from Gray, a global service provider based in Lexington, Kentucky, involved in engineering design, construction and real estate services including the data center market.

AI applications, and deep learning models in particular, require vast amounts of computational power and storage to analyze data, according to Ben Burgett, VP of the data center market unit at Gray. Data centers are struggling to keep up with the demand.

“For instance, a single AI model can consume as much energy as five cars in their entire lifetime,” he stated. The demand for specialized chips from Nvidia and other chipmakers has surged to meet the demand, as owners of Nvidia stock can attest.

The specialized components require adequate power and cooling to operate efficiently, contributing to increased space requirements for data centers. “As a result, hyperscale customers, such as large enterprises, are expanding their data centers to accommodate the growing AI workloads,” Burgett stated. “These expansions are driven by the need to house more servers and other AI-specific hardware.”

The Four Biggest Hyperscalers

The four biggest hyperscalers are AWS, Google Cloud, Meta and Microsoft Azure, according to a report from Structure Research issued in early 2023. A recent report in Data Center Knowledge put their capacity at that time at 13,177 megawatts (MW). In comparison, a 100 MW wind farm would generate the amount of power consumed by 35,000 homes in the Northeast and 18,000 homes in the South, where more air conditioning is used, according to an account from the Nuclear Regulatory Commission.
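To put the capacity figure on a household scale, the wind farm comparison can be extended with simple proportion. This is a rough sketch only: it assumes the homes-per-100-MW ratios scale linearly and that data center capacity can be compared directly with wind farm output, neither of which the cited sources state. All names in the code are illustrative.

```python
# Figures quoted above.
HYPERSCALER_CAPACITY_MW = 13_177
REFERENCE_FARM_MW = 100
HOMES_PER_REFERENCE_FARM = {"Northeast": 35_000, "South": 18_000}


def homes_equivalent(capacity_mw: int, homes_per_reference: int,
                     reference_mw: int = REFERENCE_FARM_MW) -> int:
    """Scale a homes-per-reference-farm ratio linearly to another capacity.

    Assumption: the ratio holds at any scale; this is an illustration,
    not an engineering estimate.
    """
    return round(capacity_mw * homes_per_reference / reference_mw)


equivalents = {
    region: homes_equivalent(HYPERSCALER_CAPACITY_MW, homes)
    for region, homes in HOMES_PER_REFERENCE_FARM.items()
}

for region, homes in equivalents.items():
    print(f"{region}: about {homes:,} homes")
```

On those assumptions, 13,177 MW corresponds to the power used by roughly 4.6 million homes in the Northeast or about 2.4 million homes in the South.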

Hyperscalers in the US account for 77 percent of the capacity, followed by the Asia-Pacific region, where China accounts for 24 percent of capacity, then Europe, the Middle East and Africa (EMEA), followed by Latin America. The dominant hyperscalers in all regions except Latin America are Amazon, Google, Meta and Microsoft, according to the report.

In China, the leading hyperscalers are Alibaba, Huawei, Baidu, Tencent and Kingsoft Cloud, with Amazon and Apple working their way into the standings.

Suleyman Interested in AI-Infused, Action-Oriented Agents

Suleyman, in an interview published in MIT Technology Review last year, expressed an optimistic view of the impact AI will have on society. For example, he stated, “I think it’s possible to build AIs that truly reflect our best collective selves.”

And, “For me, the goal has never been anything but how to do good in the world and how to move the world forward in a healthy, satisfying way.”

When the interviewer asked him how he is able to maintain such optimism, Suleyman stated, “I think that we are obsessed with whether you’re an optimist or whether you’re a pessimist. This is a completely biased way of looking at things.”

He maintained his team had solved issues stemming from the tendency of generative AI to sometimes spew toxic content, resolutions that make the Pi model “unbelievably controllable.” He stated, “You can’t get Pi to produce racist, homophobic, sexist—any kind of toxic stuff.”

He added, “The bottom line is, we have one of the strongest teams in the world, who have created all the largest language models of the last three or four years. Amazing people, in an extremely hardworking environment, with vast amounts of computation. We made safety our number one priority from the outset, and as a result, Pi is not so spicy as other companies’ models.”

The interviewer asked Suleyman why he was betting on generative AI. He stated in response, in part:

“The first wave of AI was about classification. Deep learning showed that we can train a computer to classify various types of input data: images, video, audio, language. Now we’re in the generative wave, where you take that input data and produce new data.

“The third wave will be the interactive phase. That’s why I’ve bet for a long time that conversation is the future interface. You know, instead of just clicking on buttons and typing, you’re going to talk to your AI.

“And these AIs will be able to take actions. You will just give it a general, high-level goal, and it will use all the tools it has to act on that. They’ll talk to other people, talk to other AIs. This is what we’re going to do with Pi.

“That’s a huge shift in what technology can do.”

Addressing Tradeoff Between Agent Autonomy and Human Control

The interviewer asked how a balance will be struck between the machine acting autonomously and the ability of humans to maintain control. Suleyman stated, “That’s a great point … The idea is that humans will always remain in command. Essentially, it’s about setting boundaries, limits that an AI can’t cross … we should figure out how independent institutions or even governments get direct access to ensure that those boundaries aren’t crossed.”

The interviewer asked who will set the boundaries and how they will be agreed upon. Suleyman stated in response, “Everybody is having a complete panic that we’re not going to be able to regulate this. It’s just nonsense. We’re totally going to be able to regulate it. We’ll apply the same frameworks that have been successful previously.”

Suleyman added, “It takes a combination of cultural pressure, institutional pressure, and, obviously, government regulation. But it makes me optimistic that we’ve done it before, and we can do it again.” He suggested regulators need to “get moving.”

Read the source articles and information in The New York Times, from Reuters, a report from Precedence Research, an account on the website of services provider Gray, in Data Center Knowledge and in MIT Technology Review.